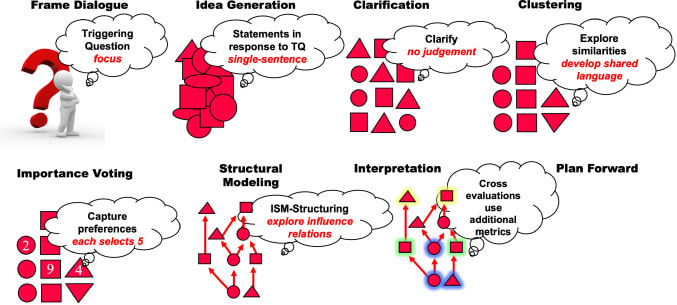

If you have ever gone out to lunch with a large group of people, you may have experienced how challenging it is for a group to make a decision. Maybe your colleagues and friends are different, but for me, it can be a real pain in the neck to find a lunch destination that everyone is happy to visit. Formal consensus-building methods exist that are loosely applicable to a group lunch decision, although they are more appropriate for complex issues. Two such group decision-making models are the Delphi method and Structured Dialogic Design (SDD). Both methods aim to keep group decisions objective. In addition, both strive to include introverts, balance contributions from extroverts, and capture all perspectives. The ideal result of each method is a group decision that reflects holistic viewpoints rather than one driven by whoever was the loudest or most senior person in the group. The strategy should reflect the issue's complexity, so I would prefer to use SDD over Delphi for complex problems.

The Delphi method begins with a series of open-ended questions about the decisions to be made. Participants then discuss the answers until a consensus is reached (Sekayi & Kennedy, 2017). For example, in our lunch destination scenario, participants may begin by asking if anyone has any food allergies or specific types of food that are strictly off-limits. The group can then eliminate some destinations to narrow down the choice. Each person provides information about their preferences, and the group slowly whittles down the potential destinations. Unfortunately, this technique may still "out-vote" one or more people's preferences by relying on a majority vote, leaving someone less vocal stuck eating Thai food when they hate Thai food.

Alternatively, Structured Dialogic Design (also called Structured Dialogical Design) may be more appropriate for complicated strategies and plans.
In SDD, the people most concerned with or affected by the issue(s) are the primary participants. Triggering questions (TQs) focus the decision, followed by statements responding to the TQs. Next, further clarification narrows the outcomes, and the group then compares and contrasts the options (e.g., by clustering). Following the grouping of choices, the participants select their preferences and explore the relations among them. Finally, the group interprets those relationships and plans how to move forward (Laouris & Romm, 2021). I would not want to use SDD to pick a lunch destination; however, a verbal version may be used to ensure that all stakeholders' interests are taken into consideration.

The Delphi method and SDD are similar in that both use framing or, as Laouris and Romm describe it, focus on the problem or issue of interest. Both also involve discussion among participants and aim to eliminate less desired options. The two methods differ most drastically in the analysis and selection of group preferences and in the deeper exploration of potential solutions that occurs in SDD. SDD aims to explore as many relationships between the group's choices as possible and to build consensus through metrics analysis and choice-relationship analysis.
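
The narrowing step from the lunch scenario can be sketched in a few lines of Python. This is only an illustration of the informal process described above, not the Delphi method as defined by Sekayi and Kennedy; the function names, the participants, and their preferences are all hypothetical.

```python
from collections import Counter

def narrow_options(options, hard_limits):
    """Drop any destination that at least one participant has ruled out."""
    banned = set().union(*hard_limits.values())
    return [o for o in options if o not in banned]

def majority_pick(options, preferences):
    """Return the option with the most first-choice votes.

    Each person votes for the first entry in their ranking that is still
    available. Ties go to the option listed earliest in `options`.
    Assumes at least one person has a remaining choice.
    """
    votes = Counter()
    for ranked in preferences.values():
        first = next((o for o in ranked if o in options), None)
        if first is not None:
            votes[first] += 1
    return max(votes, key=lambda o: (votes[o], -options.index(o)))

def outvoted(winner, dislikes):
    """List the participants who dislike the winning destination."""
    return sorted(p for p, disliked in dislikes.items() if winner in disliked)

# Hypothetical data for three colleagues.
options = ["Thai", "Pizza", "Sushi", "Burgers"]
hard_limits = {"Ana": {"Sushi"}}           # e.g. an allergy
preferences = {
    "Ana": ["Thai", "Pizza"],
    "Ben": ["Thai", "Burgers"],
    "Cal": ["Pizza", "Burgers"],
}
dislikes = {"Cal": {"Thai"}}               # strong dislike, but not a hard limit

remaining = narrow_options(options, hard_limits)
winner = majority_pick(remaining, preferences)
unhappy = outvoted(winner, dislikes)
print(remaining, winner, unhappy)
```

With this made-up data the majority picks Thai even though Cal dislikes it, which is the kind of overlooked preference a more structured process is meant to bring to the surface.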

SDD is not an effective method for trivial group decisions because it requires extensive analysis. The Delphi method, or a modified version of it, works best for a more rapid decision-making process. The value of SDD lies more with groups such as think tanks and with business strategy planning or scenario planning for organizations. For now, I think I'll stick with conceding to eat at a Thai restaurant when lunching with a group instead of turning the decision-making process into an academic exercise. Unless you're into that sort of thing? Happy trails!

References

Sekayi, D., & Kennedy, A. (2017). Qualitative Delphi method: A four round process with a worked example. The Qualitative Report, 22(10), 2755-2763.

Laouris, Y., & Romm, N. R. A. (2021). Structured dialogical design as a problem structuring method illustrated in a Re-invent democracy project. European Journal of Operational Research. ISSN 0377-2217. https://doi.org/10.1016/j.ejor.2021.11.046


This year's Holiday Hack Challenge was just as invigorating and challenging an event as usual. Santa and the Elves decided that, given all the cyber shenanigans that have occurred over the years, holding a hacking conference would be a great way to keep everyone safe. The conference is named KringleCon, and it involved an entire virtual world, complete with a virtual character who can move around and interact with CranberryPi terminals, as well as ventilation shafts, kiosks, and door code panels (Figure 1). This challenge was tough because it required knowledge across a wide spectrum of computer administration, web services, code cracking, and web servers. It also required programming and debugging skills in JavaScript, PowerShell, Python, and shell scripting, as well as data analysis. While I have a decent amount of knowledge in many of these areas, I wasn't going to be able to complete the challenge in time to submit a report to the Counter Hack team by January 14, 2019, because I started later than last year. So I recruited a good friend of mine whom I've worked with in the past, who is probably one of the most skilled computer experts and programmers I know. My friend and I worked over the last few days to finish the last four challenges/objectives and submit a report before the deadline. The result is a report of approximately 81 pages (a few typos and errors but nothing serious) describing how to finish the 2018 SANS Holiday Hack Challenge. Below is our report embedded for review.

Overall, I found the competition very different from the 2017 version. Because it was more of an open-world contest, the flow was a bit confusing until I spent a good chunk of time (about an hour) really exploring and understanding the world, and how competitors could navigate around and interact with it in any order they choose.
This proved to be a really fantastic choice by the Counter Hack team, and with everything tying together solidly by the end of the competition, I'm pleased to say this was an even better experience than last year. I'm continuously humbled by the organization, skills, and efforts of Ed Skoudis and his team. As usual, it was a fun experience and the team did a phenomenal job. I'd like to extend a personal acknowledgement to Jevan Gray for his ability to quickly understand and dissect code and assemble solutions. I doubt I could have accomplished this before the deadline without his knowledge and expertise. Thank you again, Jevan!

Introduction

Recently I volunteered and became a CyberPatriot mentor. In this program I work with local high school students who are interested in cyber security and compete in cyber security competitions. Side note: If you work in cyber security, please consider being a mentor; there are perks, and we need more STEM/cyber interest, especially from young women. One of the more challenging aspects of competitions for these groups, and for most administrators, is discovering how a system you are given may be incorrectly configured. Some examples include discovering that telnet is running, that no firewall is enabled, that an administrator account has a blank password, or that a Linux system uses root/root as its username/password. In most cases, the competitions use a plethora of operating systems, very much like most organizations (e.g. Windows 7, Windows 10, Ubuntu 14, Ubuntu 16, OSX).

The Challenge

In my experience, there are a lot of programs out there that automate this process. I began researching products I use, or know of and have used before, to share and demonstrate. This is when I learned that over the past 5 years the automated vulnerability configuration landscape has drastically changed from an open and free one to a cloud-based, paid one. This proved to be more of a challenge than I anticipated and required much more time than I had allotted. I had to adjust my requirements for products which are here:

I scoured NIST's Computer Security Resource Center (CSRC) website to find products which were Security Content Automation Protocol (SCAP) compliant. SCAP is a method for using standards to automate system configuration checks/compliance. Cyber security software providers want to be SCAP compliant to meet government requirements, and it helps in the private sector too. SCAP content uses OVAL definitions for the individual checks and the XCCDF format for checklists to assess system configurations. A tool that is SCAP compliant is going to be easier for students to learn how to use. Unfortunately, as you'll see, I didn't find one tool that does it all.

Tools, not a tool

In this case, students take a recommended configuration checklist written in XML-based evaluation standards (OVAL, XCCDF) and feed the file/list to an evaluation tool/program, which outputs results showing the differences. The problem is that a lot of the tools out there are either no longer free or only work for specific operating systems. This means having to use not one tool, but two. Note: OpenVAS is a good tool for this, but it is complicated and difficult to learn and use, as it has many more features outside of configuration analysis.

The Tools I ended up choosing were

As the tools above show, I did find some tools, but there is not one tool. I discovered that many are available, but there wasn't a single inexpensive or free tool that could help these students with assessments during cyber security competitions. I think the industry is doing a disservice to young people learning cyber security by not providing readily available tools for them to practice with and learn to use. Cyber security is tough. There is an overwhelming amount of content, vulnerabilities, system-specific caveats, configuration data, network knowledge, and programming information a person has to learn while also trying to do it easily. I hoped there would be an easy way for these students learn I have is that an automated way to review hundreds or thousands of configuration settings

Contrary to popular belief, Grandma is pretty savvy when it comes to cybersecurity; after all, she has had to put up with Grandpa's antics her whole life. There are a few headlines stating Baby Boomers are more savvy than millennials in this area, but I'm not sure that has anything to do with cybersecurity. In reality, cybersecurity mirrors personal security and personal safety. Does Grandma typically share her personal information? No, she's probably learned that lesson somewhere over the years. Does she jump on every "too good to be true" bargain (Nigerian Prince scams) she finds in an email? Nope, she's learned that lesson too. So what makes Grandma a perfect case study for cybersecurity?

Well, we love Grandma, and she doesn't get a lot of credit in this area, but Grandmas are special ladies and they can teach us a lot. They glue the family together, communicate well (I'm generalizing here), and willingly share life lessons in a compassionate way. Yet all these "newfangled" complex words in the computer world don't match up 1:1 with her lessons, so it is not easy to apply them to cyber-land. Here are six "lessons" from Grandma, translated into cybersecurity terminology to help us understand WTH (What The Heck) it means today.


Author

I am a Doctoral Scholar at Colorado Technical University and a graduate of the Cyber Security Operations and Leadership program from the University of San Diego. I work in cybersecurity, and have accumulated twenty years in the IT industry. There are few IT roles I have not performed, which gives me great insights into making sense of all the IT confusion.