|

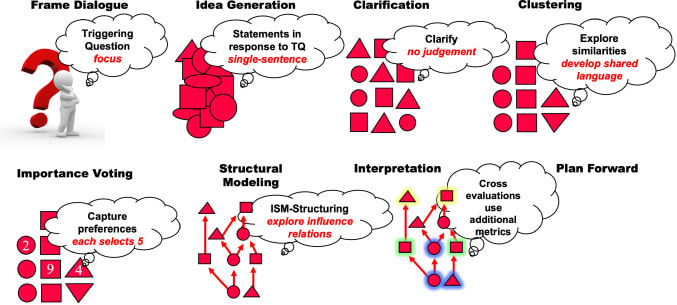

If you have ever gone out to lunch with a large group of people, you may have experienced how challenging it is for a group to make a decision together. Maybe your colleagues and friends are different, but for me, it can be a real pain in the neck to find a lunch destination that everyone is happy to visit. Formal consensus-building methods exist that are loosely applicable to a group lunch decision, although they are more appropriate for complex issues. Two such group decision-making models are the Delphi method and the Structured Dialogical Design (SDD) method. Both methods aim to make group decisions more objective. In addition, both strive to include introverts, balance contributions from extroverts, and capture all perspectives. The ideal result of each method is a group decision that reflects a holistic viewpoint rather than one based on who was the loudest or most senior person in the group. The strategy should reflect the issue's complexity, so I would prefer to use SDD over Delphi for complex problems.

The Delphi method begins with a series of open-ended questions about the decisions to be made. Participants then discuss the answers until a consensus is reached (Sekayi & Kennedy, 2017). For example, in our lunch destination scenario, participants may begin by asking if anyone has any food allergies or specific types of food that are strictly off-limits. The group can then eliminate some destinations to narrow down the options. Each person provides information about their preferences, and the group slowly whittles down the potential destinations. Unfortunately, this technique may still rely on a majority vote that "out-votes" one or more people's preferences, leaving someone less vocal stuck eating Thai food when they hate Thai food.

Alternatively, Structured Dialogical Design (SDD) may be more appropriate for complicated strategies and plans.
In SDD, the persons most concerned with or affected by the issue(s) are the primary participants. Triggering questions (TQs) focus the decision, and participants respond to them with statements. Next, further clarification narrows the outcomes, and the group then compares and contrasts the options (e.g., by clustering). Following the grouping of choices, the participants select their preferences and explore the relationships among them. Finally, the group interprets those relationships and plans how to move forward (Laouris & Romm, 2021). I would not want to use SDD to pick a lunch destination; however, an informal verbal version may be used to ensure that all stakeholders' interests are taken into consideration.

The Delphi method and SDD are similar in that both use framing or, as Laouris and Romm describe it, a focus on the problem or issue of interest. Both also involve discussion among participants and aim to eliminate less desired options. The two methods differ most drastically in the analysis and formal selection of group preferences and in the deeper exploration of potential solutions that occurs in SDD. SDD aims to explore as many relationships among the group's choices as possible and to build consensus through metrics analysis and choice relationship analysis.

SDD would not be a very effective method for making a trivial group decision because it requires extensive analysis. The Delphi method, or a modified version of it, works best for a more rapid decision-making process. The value of SDD is more apparent for think tanks, business strategy planning, or organizational scenario planning. For now, I think I'll stick with conceding to eat at a Thai restaurant when lunching with a group instead of turning the decision-making process into an academic exercise. Unless you're into that sort of thing? Happy trails!

References

Sekayi, D., & Kennedy, A. (2017). Qualitative Delphi method: A four round process with a worked example. The Qualitative Report, 22(10), 2755-2763.

Laouris, Y., & Romm, N. R. A. (2021). Structured dialogical design as a problem structuring method illustrated in a Re-invent democracy project. European Journal of Operational Research. ISSN 0377-2217. https://doi.org/10.1016/j.ejor.2021.11.046


Information security is a challenging field for organizations, made more difficult by constant change in information technology. Higher education institutions thrive on open access to information and collaboration. Yet they also store and process sensitive data such as student records, student health records, credit card data, and State and Federal government data. An essential part of an information security professional's role is knowing what types of data the organization has and where that data is located. However, the 2021 EDUCAUSE Horizon Report: Information Security Edition identified expanding and borderless network boundaries as a technological trend (EDUCAUSE, 2021). Networks without well-defined borders are difficult for information security teams to protect. As organizations migrate data to cloud-hosted solutions, devices not managed by the organization can access that data. For example, personal and mobile devices may access cloud-hosted data that is stored or processed across devices subject to different laws, regulations, and endpoint security access controls. Information security teams will need to identify and monitor each device accessing their data and ensure that it is free from malicious software and can safely access sensitive data.

The societal and cultural trends amplified by the remote work adopted to accommodate COVID-19 safety protocols have compounded these information security challenges. Information security teams can detect and respond to security incidents on endpoint devices such as computers, tablets, and other mobile devices only as long as end-users comply with security requirements on personal devices. For example, end-users will need to install endpoint protection software and keep their devices current with security updates.
However, users today want convenient access to data and, though aware of the risks, may not be vigilant about information security practices. The Horizon Report identified the deployment of cloud-based endpoint protection platforms (EPPs) as an important technological trend for managing the security of organizational data. EPPs will be required to provide information security as users continue to access data from personal and public networks due to COVID safety protocols and the convenience of remote work. COVID and cultural trends pushed network boundaries out of organization-controlled network infrastructure and into a wider, borderless networked world. As one indication of the number of remote students, a survey performed by the National Center for Education Statistics identified 7,313,623 students enrolled in distance education courses at post-secondary institutions in 2019 (Institute of Education Sciences, 2015).

To maintain visibility of mobile and remote devices, information security teams must have a window into network and device security posture so they can identify potential abuse and breaches. Higher education information security teams can use EPP provided to end-users to monitor these remote systems accessing organizational data. Organizations will have to work through legal and licensing challenges with vendors that may complicate deploying organization-purchased software to personally owned devices. In addition, software must remain easy to deploy, maintain, and use so that technological barriers do not get in the way of convenient access to data and reduce user adoption. In my past role on a higher education information security team, the organization was able to work with vendors to provide EPP to students, faculty, staff, and affiliates without a significant increase in product cost. In addition, open and transparent participation and communication with faculty, staff, students, and affiliates in developing a policy that specified how organizational software on personal devices could and would be used improved their willingness to install the software and comply with information security requirements. Even though network boundaries are shifting and appear borderless, higher education organizations can benefit from widely deployed EPP to continue providing information security across the spectrum of devices accessing organizational data.

References

EDUCAUSE. (2021). 2021 EDUCAUSE Horizon Report: Information Security Edition (p. 10). EDUCAUSE. https://library.educause.edu/-/media/files/library/2021/2/2021_horizon_report_infosec.pdf?la=en&hash=6F5254070245E2F4234C3FDE6AA1AA00ED7960FB

Institute of Education Sciences. (2015). The NCES Fast Facts Tool provides quick answers to many education questions (National Center for Education Statistics). Ed.gov; National Center for Education Statistics. https://nces.ed.gov/fastfacts/display.asp?id=80

In my own words, computer forensics is the scientific method of obtaining digital evidence from computing devices while maintaining its integrity and authenticity so that the evidence may be accepted in a court of law. I recently completed a master's course in Computer Forensics at the University of San Diego after having previously mentored high school students in computer forensics for the Air Force Association's CyberPatriot program and Cal Poly's 2018 California Cyber Innovation Challenge. This field is vast, constantly changing, and much more tedious than any movie or television program portrays. However, working as a computer forensic analyst and discovering a clear and consistent timeline of activities or artifacts that tells a story makes a lot of the labor worthwhile. This is the exciting part.

Computers and the Internet are tools. Tools can be used as they are intended, or they can be abused. Unfortunately, a lot of abuse is aided by the use of computers and the Internet: child exploitation, human trafficking, terrorism, financial crimes, or even plain human rights violations leading to murder, imprisonment, or torture. The field of computer forensics attempts to aid companies and governments in providing evidence of these serious crimes by analyzing the systems involved. Some wonderful resources I have found for computer forensics include the following, in no particular order:

In 2008 Flo Rida had a song "Low" featuring T-Pain with the line "Boots with da fur", which my family loved belting out whenever we heard it on the radio. This blog article has nothing to do with getting your club on. Rather, DFIR (Digital Forensics and Incident Response), pronounced Dee-FUR, just reminded me of it. DFIR has a significant place in cybersecurity competitions. Many of the California and national competitions include a large portion of digital forensics, so having the right tools for the job makes all the difference. Some bright students I've been mentoring have asked, "What OS do you recommend for DFIR?" My default response is typically Kali Linux, but I wanted to take a deeper look into SANS' SIFT workstation.

If you found yourself accidentally, or intentionally, reading my earlier blog postings, you'll note that many of these high school and middle school competitions require competitors to use open source and freely available software. That made this a great opportunity to revisit and try out SANS' SIFT Workstation. This Ubuntu-based distribution (Ubuntu itself being based on Debian Linux) was developed, and is maintained, by Rob T Lee of SANS, a formidable training group. It features automatic updates and a plethora of free and frequently updated tools for Digital Forensics and Incident Response. Learning to use these tools is a topic for other blog postings; this post covers getting the most recent version of a great DFIR tool installed and working on this OS build. There are a lot of DFIR tools and operating systems available for use. A small sampling of them includes Linux

Get your SIFTv3 workstation image/installation and update/upgrade it

I'll assume you're familiar with obtaining a virtual image of SIFT and getting it installed. I'll use VirtualBox for this post, as it's free and feature-rich. You can download a pre-configured SIFT workstation as a virtual machine in OVA format at https://digital-forensics.sans.org/community/downloads under the section "Download SIFT Workstation VM Appliance". After you get your workstation up and running (there are other tutorials for that), update it through its built-in updater, or open a shell/terminal window and type `sudo apt-get update && sudo apt-get upgrade`. After this, there are only a few other steps to install the Java version required to support TSK and Autopsy 4+.

Installing testdisk for photorec functionality

Note that I did not find this necessary for versions 4.6 or 4.7 of Autopsy; however, it can't hurt to test. Enter this command in a shell: `sudo apt-get install testdisk`

Installing Java to support TSK/Autopsy 4+

After you've updated the operating system and verified that testdisk is installed, check whether Java's version is 1.8 or higher by typing `javac -version` in the shell/terminal. Your output should show something like `javac 1.8.0_171`. Ensure it starts with at least version 1.8.0. If not, add Java's repository to your update sources with the command `sudo add-apt-repository ppa:webupd8team/java`, then update your local package index with `sudo apt-get update`, and finally install the Java 8 installer with `sudo apt-get install oracle-java8-installer`. When installation completes (it may take one to five minutes or more), check your Java version again with `javac -version`.

Oftentimes you'll have more than one version of Java installed, so another command is required to check which version is the primary one and to show the file path where it is located. Run `sudo update-alternatives --config java` to show a list of Java installations on the system. Check that 1.8 is marked as the default/primary version with an asterisk (*) in its row, and copy down the path to Java 1.8, as you'll need to add that path to your environment variables. Type `sudo nano /etc/environment` to open the nano text editor, and add the JAVA_HOME line below (with your path) as a new line in the /etc/environment file. Note that you only need to include the path up to /java-8-oracle.

JAVA_HOME="/usr/lib/jvm/java-8-oracle"

Press CTRL+X to exit, and save the changes when asked. Reload your environment variables with `source /etc/environment`, then verify the variable shows the correct path by running `echo $JAVA_HOME`. Note: These installation instructions come from the article https://medium.com/coderscorner/installing-oracle-java-8-in-ubuntu-16-10-845507b13343 which you can visit for more details.

Getting and Installing the latest version of The Sleuth Kit and Autopsy

Visit https://github.com/sleuthkit/sleuthkit/releases and download the latest version of the .deb package, typically named something like sleuthkit-java_4.6.1-1_amd64.deb. Then install this Debian package of The Sleuth Kit by running `sudo apt install ./sleuthkit-java_4.6.1-1_amd64.deb`. Now that we have the latest version of The Sleuth Kit, download the latest version of Autopsy. It is typically released as a zip file at https://github.com/sleuthkit/autopsy/releases and named something like autopsy-4.7.0.zip.
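Before moving on to Autopsy itself, the Java version check from the earlier step can also be expressed as a small script. The following is a minimal Python sketch, not part of the original instructions: the function names `parse_javac_version` and `meets_java_8` are hypothetical, and the sketch assumes the version text has the `javac 1.8.0_171` shape shown above (newer JDKs print something like `javac 11.0.2`, which it also accepts).

```python
import re
from typing import Tuple


def parse_javac_version(output: str) -> Tuple[int, int]:
    """Extract (major, minor) from text such as 'javac 1.8.0_171'."""
    match = re.search(r"javac\s+(\d+)(?:\.(\d+))?", output)
    if match is None:
        raise ValueError(f"unrecognized javac output: {output!r}")
    major = int(match.group(1))
    minor = int(match.group(2) or 0)  # 'javac 17' has no minor component
    return major, minor


def meets_java_8(output: str) -> bool:
    """True when the reported version is 1.8 or newer."""
    return parse_javac_version(output) >= (1, 8)


# Sample strings only; paste in the text your own `javac -version` prints.
for sample in ("javac 1.7.0_80", "javac 1.8.0_171", "javac 11.0.2"):
    status = "OK" if meets_java_8(sample) else "too old for Autopsy 4+"
    print(f"{sample}: {status}")
```

Comparing the parsed numbers as a tuple avoids the trap of comparing version strings alphabetically, where "1.10" would sort before "1.8".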
Create a directory for Autopsy wherever you prefer with `mkdir autopsy-4.7.0`, and then unzip the file you downloaded there with `unzip autopsy-4.7.0.zip`. Finally, run the setup script in that directory with `sudo sh unix_setup.sh`. If everything is in order, you'll have a working version of Autopsy and The Sleuth Kit. You may run Autopsy by changing to the 'bin' directory in the autopsy folder you created and then typing the command `./autopsy`

Summary

With a recent working version of The Sleuth Kit and Autopsy on SIFTv3, you have many of the tools competitions require for DFIR tasks. There are still many things you won't be able to do without additional work, for example extracting specific registry keys and values from forensic images. Fortunately, Autopsy includes a modular framework which supports both Java and Python, and the community has created many of these tools for us. Check them out at https://wiki.sleuthkit.org/index.php?title=Autopsy_3rd_Party_Modules

Today's digital forensic investigators benefit greatly from the ease with which an operating system such as SANS' SIFT Workstation lets them install and maintain their tools. Combined with great open source tools like The Sleuth Kit and Autopsy, the hardest part is now learning how to investigate: discovering where you need to look, what information you need to look for, and how to get it. I found one great resource, shared by a student, on the website for Cal Poly's California Cyber Innovation Challenge, which discusses analyzing Windows 7-10 and Android operating systems. The papers there contain great tutorials and basic training. Visit the Cal Poly California Cyber Innovation Challenge site at https://cci.calpoly.edu/events/ccic and review the downloadable material at https://cci.calpoly.edu/events/ccic/2018-df-downloads

Happy hunting and many thanks to Cal Poly, The Sleuth Kit and Autopsy developers and SANS Digital Forensics!

Introduction

Recently I volunteered and became a CyberPatriot mentor. In this program I work with local area high school students interested in cyber security who compete in cyber security competitions. Side note: If you work in cyber security, please consider being a mentor; there are perks, and we need more STEM/cyber interest, especially from young women. One of the more challenging aspects of competitions for these groups, and for most administrators, is discovering how a system you are given may be incorrectly configured. Some examples include discovering that telnet is running, that no firewall is enabled, that an administrator account has a blank password, or that a Linux system uses root/root as its username/password. In most cases, the competitions use a plethora of operating systems, very much like most organizations (e.g., Windows 7, Windows 10, Ubuntu 14, Ubuntu 16, OSX).

The Challenge

In my previous experience, there were a lot of programs out there that automate this process. I began researching products I use, or know of and have used before, to share and demonstrate. This is when I learned that over the past 5 years the automated configuration vulnerability assessment landscape has drastically changed from an open and free one to a cloud-based, paid one. This proved to be more of a challenge than I anticipated and required much more time than I had allotted. I had to adjust my requirements for products, which are here:

I scoured the website of NIST's Computer Security Resource Center (CSRC) to find products which were Security Content Automation Protocol (SCAP) compliant. SCAP is a method for using standards to automate system configuration checks/compliance. Cyber security software providers want to be SCAP compliant to meet Government requirements, and it helps in the private sector too. SCAP compliance uses the former OVAL-based standards or the newer XCCDF checklist format to assess system configurations. A tool that is SCAP compliant is going to be easier for students to learn how to use. Unfortunately, as you'll see, I didn't find a single tool.

Tools, not a tool

In this case, students take a recommended configuration checklist written in XML-based evaluation standards (OVAL, XCCDF) and feed the file/list to an evaluation tool/program, which outputs results showing how the system differs from the checklist. The problem is that a lot of the tools out there are either no longer free or only work for specific operating systems. This means having to use not one tool, but two. Note: OpenVAS is a good tool for this, but it is complicated and difficult to learn and use, as it has many more features outside of configuration analysis.

The Tools I ended up choosing were

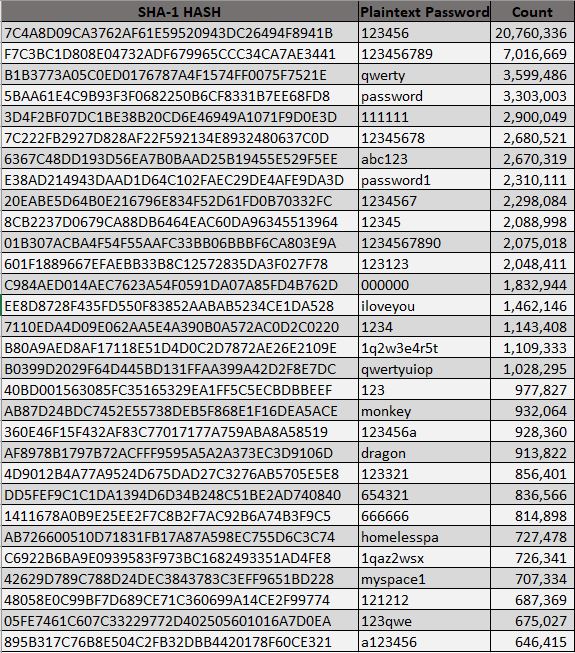
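To make the idea behind these tools concrete, the checklist comparison described earlier (a recommended configuration evaluated against a system, with the differences reported) can be illustrated with a minimal Python sketch. This is not SCAP, OVAL, or XCCDF: real checklists are XML documents processed by dedicated tools. The setting names, values, and the `find_deviations` helper below are hypothetical, loosely based on the misconfiguration examples mentioned in the introduction.

```python
from typing import Dict, List, Tuple

# Hypothetical recommended baseline; names and values are illustrative only.
RECOMMENDED: Dict[str, str] = {
    "telnet_service": "disabled",
    "firewall": "enabled",
    "admin_password_blank": "no",
}


def find_deviations(
    recommended: Dict[str, str], actual: Dict[str, str]
) -> List[Tuple[str, str, str]]:
    """Return (setting, expected, found) for every setting that differs."""
    deviations = []
    for setting, expected in sorted(recommended.items()):
        # A setting the system never reported is treated as a deviation.
        found = actual.get(setting, "<not reported>")
        if found != expected:
            deviations.append((setting, expected, found))
    return deviations


# Hypothetical state gathered from a competition image.
observed = {
    "telnet_service": "running",
    "firewall": "enabled",
}

for setting, expected, found in find_deviations(RECOMMENDED, observed):
    print(f"{setting}: expected {expected}, found {found}")
```

The hard part that real tools solve is the step this sketch skips: collecting the actual settings from each operating system, which is why a tool tends to support only specific platforms.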

As the tools above show, I did find some tools. But there is not one tool. I discovered there were many available, but that there wasn't a single inexpensive or free tool which could help these students with assessments during cyber security competitions. I think the industry is doing a disservice to young people learning cyber security by not providing readily available tools for them to practice with and learn to use. Cyber security is tough. There is an overwhelming amount of content, vulnerabilities, system-specific caveats, configuration data, network knowledge, and programming information a person has to learn. I had hoped there would be an easy way for these students to learn: an automated way to review hundreds or thousands of configuration settings.

Here I present the top 30 passwords taken from Troy Hunt's haveibeenpwned.com/passwords website, where he provides a great tool and resource to verify if your password is in a breach disclosed to Have I Been Pwned. He also released the SHA-1 hashes of over half a billion disclosed passwords to be downloaded for our own pleasure. From this I did a quick analysis.
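For readers who want to try a similar lookup, here is a minimal Python sketch of checking a candidate password against a list of SHA-1 hashes. It is an illustration rather than the analysis performed for this post. It assumes each line of the downloaded file has the form `HASH:COUNT` with an uppercase hexadecimal hash, and the helper names (`sha1_hex`, `load_counts`, `times_seen`) are hypothetical. The single sample line is built in code from the "myspace1" count reported in this post.

```python
import hashlib
from typing import Dict, Iterable


def sha1_hex(password: str) -> str:
    """Uppercase hexadecimal SHA-1 digest of a password."""
    return hashlib.sha1(password.encode("utf-8")).hexdigest().upper()


def load_counts(lines: Iterable[str]) -> Dict[str, int]:
    """Parse 'HASH:COUNT' lines (assumed format) into a lookup table."""
    counts: Dict[str, int] = {}
    for line in lines:
        line = line.strip()
        if not line:
            continue
        digest, _, count = line.partition(":")
        counts[digest.upper()] = int(count)
    return counts


def times_seen(password: str, counts: Dict[str, int]) -> int:
    """How often the password's hash appears; 0 if it is absent."""
    return counts.get(sha1_hex(password), 0)


# One sample line, using the count for "myspace1" quoted in this post.
sample_lines = [f"{sha1_hex('myspace1')}:707334"]
counts = load_counts(sample_lines)

print(times_seen("myspace1", counts))
print(times_seen("correct horse battery staple", counts))
```

The full download is far too large to hold comfortably in a dictionary on a small virtual machine, so for the real file you would more likely scan it line by line and stop at the first match.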

Note that Troy did not release the plaintext passwords, as some contained sensitive material; thus it took a little searching to convert these, but fortunately for us, or unfortunately depending on how you look at it, they were easy to Google/convert (e.g., put the hash into Google and find a match). It's no surprise that number sequences and keyboard patterns make up the bulk of the list. One outlier was "myspace1", which was identified 707,334 times in breach disclosures. This is likely due to the breach of passwords from the website myspace.com and users choosing "myspace1" to meet minimum password requirements. The infamous "password", "password1", "iloveyou", "monkey", and "dragon" appear in the top 30. People love password monkey dragons! Enjoy!

In this document I analyze the Windows NT architecture and its implementation of the Security Reference Monitor model. I identify gaps and strengths in the implementation from Windows 2000 through Windows 10 and Windows Server 2012R2. The bottom line is that Windows NT is a complex, flexible, robust system; however, it supports so many capabilities that they hinder a simple security model, basic security reference monitor principles, and truly secure operations. The operating system can, of course, be hardened; however, its basic structure presents challenges that would require many other systems to provide compensating security controls as well as mitigations and detective controls. I'll still use Windows; however, a complete redesign of its security reference monitor implementation may be required to provide a secure, auditable, and tamper-resistant operating system. This document was created for an assignment in my Cyber Security Operations and Leadership program at the University of San Diego. |

Author

I am a Doctoral Scholar at Colorado Technical University and a graduate of the Cyber Security Operations and Leadership program from the University of San Diego. I work in cybersecurity, and have accumulated twenty years in the IT industry. There are few IT roles I have not performed, which gives me great insights into making sense of all the IT confusion.

Archives

February 2022

Categories

All

|

Cybersecurity and Information Security Resources
